Summoning Ghosts, Not Building Animals: The Soul of Work in the Age of AI

I have already written two posts on this topic, and this will probably be my last.

I think most people already know that LLMs are, at bottom, vast arrays of numbers: learned weights that form specific relationships when prompted in a specific direction. As a line from the Dwarkesh Patel podcast puts it perfectly: "We're summoning ghosts, not building animals." These models don't think. They don't feel. They echo patterns at extraordinary scale. And that distinction matters more than most people realize, because it raises an uncomfortable question: what kind of work has a soul, and what kind doesn't?

What do I mean by a job having a soul? If the effort is novel and unique in its creative exploration, then the job has a soul. If it's merely repeating a known pattern with minor variation, it doesn't. Keep that distinction in mind. Everything that follows hinges on it.

The Unusual Trend: More People Have Started Writing

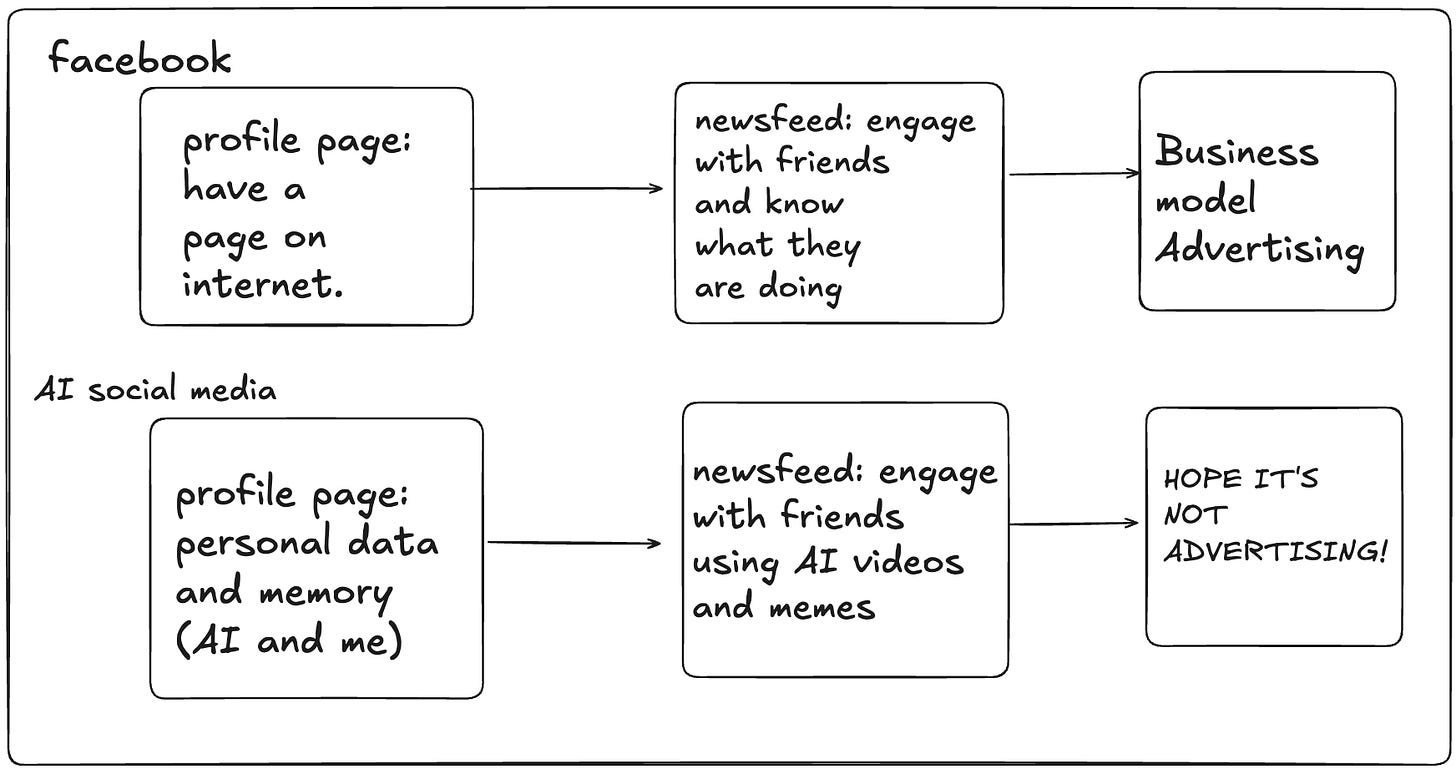

Claude can write great articles today, and yet more people feel that writing is a far more important skill than it used to be. That feeling is right, because writing is now how you direct these models: a well-written prompt produces tangible outcomes. Original, fresh ideas suddenly feel far more valuable than they once did. When anyone can generate polished prose in seconds, the bottleneck shifts upstream to the person who has something worth saying.

AI models may still unlock more than 10 trillion dollars of wealth, but with the right entrepreneurs, it won't be the model companies that take home most of the pie. It will be the new companies built on top of them. Within the next 10 years, I expect that none of today's top 10 AI companies will still be in the top 10. The platform always shifts. The builders who ride it change with every wave.

Until recently, reproducing a complex body of existing knowledge had economic value, which is why the edtech industry stood strong on that foundation. Students paid to learn what others already knew, and teachers were paid to transmit it. Now much of that is automated. The transmission of known knowledge is no longer a defensible business. What remains defensible is the creation of new knowledge, and the wisdom to know where to apply it.

The Case Against Software Engineers

This isn't hypothetical anymore. Block, Amazon, and several other major corporations have laid off significant percentages of their engineering staff, citing AI as a direct reason. These aren't bluffs or restructuring euphemisms. The economics have genuinely changed.

Coding is being automated at a breathtaking pace. Given the right instructions, tools like Claude Code enable rapid innovation cycles in which product managers, architects, and others simply write good prompts and think deeply about the problem. The gritty work of programming (the syntax, the boilerplate, the debugging of edge cases at 2 AM) is increasingly done by these models. This makes a certain kind of software engineer obsolete: the one whose primary value was translating known specifications into known code patterns. If your job was to be the human compiler between a product requirement document and a pull request, the ghost can do that now.

The Case For Software Engineers

The a16z crowd will point you to Jevons Paradox: when a resource becomes cheaper and more efficient to use, total demand for it often rises rather than falls. Applied to software, cheaper code means more software gets built, which means more engineers are needed overall. It's an elegant argument.

But we have not seen clear evidence of that playing out so far. It has been three years since Cursor launched. The hiring numbers haven't surged. If anything, engineering teams are getting leaner while shipping more.

Still, the other half of the argument holds weight. Software still drives productivity, and many physical, discrete systems could benefit from intelligent software and agents working on them. "Software is eating the world" remains a popular saying because it describes something real. Software streamlines processes and scales in ways physical labor cannot. We have seen this play out time and again, from logistics to finance to healthcare. The world is full of messy, analog systems waiting to be made legible by code. That work isn't going away. If anything, AI makes it more accessible.

My Point of View

The role of AI should be to increase efficiency by commoditizing large parts of the market. And that commoditization creates a new kind of demand. We need a lot of AI engineers to ensure that the pipelines for sales, content, and the most valuable asset on the internet (attention) are managed by the right builders. These engineers would understand physical, discrete systems well and be able to apply intelligence, in the form of AI models, everywhere. Not just in software, but in supply chains, in energy grids, in agriculture, in the thousand unglamorous industries that haven't yet been touched by a single line of automated code.

Soon, companies may stop fixating on cost savings and start focusing on growing revenue, and it will be AI engineers who promise and deliver that growth. Cost-cutting has a floor. Revenue growth does not. The companies that figure this out first will hire aggressively while their competitors are still celebrating how many engineers they managed to let go.

My contrarian opinion: hire as many software engineers as you can. Not the ones who write code that a model can write. The ones who understand systems, who see where intelligence can be inserted into the physical world, who can architect what doesn't yet exist. Hire the ones with soul in their work.

The Soul Test

Science and storytelling require the most soul. They demand novelty. They punish repetition. A scientist who merely reproduces known results is not doing science. A storyteller who recycles familiar plots without genuine insight is not telling a story worth hearing. These disciplines, by their nature, resist automation, not because the tools can't mimic their outputs, but because their value lies precisely in the parts that haven't been done before.

Repetitive engineering requires the least soul. If the job you work on has been done a million times before and you are not creatively contributing anything new to it on a regular basis, then yes, you are competing directly with a ghost. And ghosts work for fractions of a penny, never sleep, and never complain.

My Prediction

Here is where I'll leave this, my final word on the topic.

The next decade will not be defined by AI replacing humans. It will be defined by a great sorting, a separation of soulful work from soulless work, cutting across every profession, every industry, every title. Some surgeons will be replaceable. Some plumbers won't be. Some PhDs will find their entire research agenda automated overnight. Some high school dropouts building strange, novel things in their garage will become indispensable.

The question you should be asking yourself is not "Will AI take my job?" The question is: "Does my job have a soul?" If what you do each day involves genuine creative exploration, deep problem-solving in novel contexts, or the kind of human judgment that emerges only from lived experience in the physical world, you are not competing with ghosts. You are the one summoning them.

And if your work is repetition dressed in complexity? The ghost is already learning your name.